"It's high time UN agencies and the mainstream media acknowledge the true scale of global poverty and engage in a long overdue public debate on how ambitious and transformative the international development agenda really is."

As the star-studded endorsements and media hype surrounding the all-pervasive Global Goals campaign begin to subside, a very different truth is beginning to emerge about this latest attempt by the international community to end poverty and create an ecologically viable future. Despite the UN's ambitious claims, all the indications are that the Sustainable Development Goals (SDGs) do not have the potential to "free the human race from the tyranny of poverty and want" or "heal and secure our planet". On the contrary, the new agenda for development fails to address the root causes of today's interconnected global crises, perpetuates a false narrative about poverty reduction, and reinforces an unsustainable economic paradigm that is inherently incapable of reducing the true scale of human deprivation by 2030.

If taken at face value, it may seem irresponsible for anyone to dismiss the broad vision and prime objective of the SDGs to "end poverty in all its forms everywhere", if only because it presents a valuable opportunity to improve intergovernmental cooperation and focus both political and public attention on pressing global issues. As Share The World's Resources have outlined in a recent report, however, there are many reasons to question not only the targets themselves, but the entire sustainable development initiative and the political-economic context within which it will be implemented.

For example, one of the key concerns that emerged from the Financing for Development talks that accompanied the SDGs negotiations was whether governments will be able to raise and redistribute the huge sums of money needed to meet the goals, especially given that developing countries face an estimated annual gap of $2.5 trillion in SDG-relevant sectors. Even though levels of international aid still fall far short of the 0.7% of gross national income that donor countries have repeatedly pledged for more than 45 years, governments attending the financing talks failed to agree any concrete measures for redistributing more of the world's highly concentrated wealth to protect the most vulnerable people.

Instead of agreeing to provide significantly more funding for development, donor governments pushed for countries in the Global South to take greater responsibility for mobilising finances domestically. At the same time, they effectively refused to implement any of the urgent measures that civil society has long been calling for to prevent illicit financial flows, tackle tax avoidance or restructure external debts, measures that could mobilise many billions of dollars in additional revenue each year for low-income countries. Until these critical issues are addressed, foreign aid will continue to be dwarfed by the net flow of financial resources from the Global South to the North, which suggests that in reality the populations of (resource-rich) low-income nations continue to finance the development of rich nations rather than the other way around.

Unwarranted importance was also placed on scaling up public-private partnerships as a way of raising finance, a measure that scores of campaigners and civil society organisations argue has established a corporate development agenda that will benefit businesses far more than those living in extreme poverty. As summarised in a joint statement released by numerous civil society groups during the Financing for Development negotiations, "[the] emphasis on private financing and the role of transnational corporations will further weaken public policy space [for] governments and fails to address the unfinished business of regulating the financial sector despite the extreme and intergenerational poverty created by the global crisis."

Another major critique widely voiced by environmentalists is that the SDGs encourage governments to maintain their obsession with putting economic growth before pressing social and environmental concerns. In particular, SDG 8 is entirely devoted to the promotion of "sustained, inclusive and sustainable economic growth", even though there is now ample evidence to suggest that relying on the trickle-down of global economic growth is not an effective way to end poverty. Many campaigners have cited detailed projections by David Woodward (based on optimistic assumptions about future rates of global economic growth) indicating that it would take at least 100 years to eradicate poverty at the $1.25-a-day level, and twice as long at the more appropriate $5-a-day measure of poverty.

Far from promoting a truly ecological agenda for development, the SDGs reflect the widely recognised and profound contradiction between the pursuit of economic growth and the very notion of sustainability. Not a single country has managed to decouple economic growth from environmental stress and pollution, and achieving any significant level of decoupling remains highly unlikely in the foreseeable future. Indeed, evidence suggests that accelerating economic growth in order to speed up poverty reduction will result in a rise in global carbon emissions that would wipe out any possibility of keeping climate change to within the acceptable margin of a two degrees centigrade increase.

Do the poor count?

However much we would like to believe that governments are on track to end poverty by 2030, a more detailed examination of the available data shows that the received wisdom about our economic progress is largely based on misdirection and exaggeration. According to official UN statistics, there has been a steep drop in global poverty levels over the past 25 years. In 1990, around half of the developing world reportedly lived on less than $1.25-a-day, a figure that fell significantly to 14% by 2015. According to new data just released by the World Bank, these numbers have diminished even more rapidly in recent years, with as little as 10% of the world's population now living in poverty.

However, there are serious concerns about how changes to the way poverty is calculated have contributed to the illusion that poverty was significantly reduced as a result of the Millennium Development Goals (MDGs). Most significantly, the baseline year for measuring progress was shifted back to 1990 in order to include all the poverty reduction that took place (mainly in China) well before the Millennium Campaign even began. On more than one occasion, changes to the way the poverty line was calculated meant that hundreds of millions of people were subtracted from the MDG poverty statistics overnight.

The World Bank's definition of what constitutes extreme poverty is also widely regarded by economists as highly problematic. According to the Bank's latest revision, this all-important measure is now based on an international poverty line of $1.90-a-day (previously $1.25-a-day). This exceedingly low and highly contentious poverty threshold reflects how much $1.90 can purchase in the USA, not in a low-income country like Malawi or Madagascar, as is often believed. It's clear that meeting even the most basic human needs for access to food, water and shelter, let alone paying for basic medical services, would be impossible to achieve in the United States with so little money. In comparison, the official poverty line for people who live in the United States is set at the substantially higher rate of around $16-a-day.

At the very least, this behoves the World Bank and the SDGs to adopt a morally appropriate dollar-a-day poverty line that accurately reflects a minimum financial requirement for human survival. This is a view shared by the United Nations Conference on Trade and Development (UNCTAD), which argues that only by using a higher threshold of $5-a-day would it be possible to fulfil the right to "a standard of living adequate for ... health and well-being" as set out in Article 25 of the Universal Declaration of Human Rights more than 65 years ago. According to World Bank statistics, poverty at this slightly higher level of income increased consistently between 1981 and 2010, rising from approximately 3.3 billion to almost 4.2 billion people over that period.

If the Millennium Campaign had used this more appropriate poverty threshold, MDG-1 would clearly not have been met: rather than halving the number of people living without sufficient means for survival, there are 14% more people living in $5-a-day poverty now than in 1990. As ActionAid and others rightly suggest, however, a $10-a-day benchmark may be a far more realistic measure of poverty when comparing lifestyles in rich and poor countries, which would mean that an alarming 5.2 billion people still live in poverty today.

There can be little doubt that the mainstream narrative about how global poverty is being dramatically reduced distracts from the need to address its structural causes and defuses public outrage at what is, in reality, a worsening crisis of epic proportions, one that demands a far more urgent response from governments than the SDGs can deliver. At the very least, adopting a more realistic international poverty line would transform our understanding of the magnitude and persistence of poverty in the world, and spark a long overdue debate on how ambitious and transformative the international development agenda really is.

Ending the global emergency of avoidable deaths

While such critiques of UN poverty statistics are necessary to highlight the truth about global poverty levels, they still don't fully illustrate what life-threatening deprivation means in human terms, especially for those of us living in affluent countries who have little or no contact with the world's poor. World Bank figures conceal a disturbing fact about what it really means to forgo access to life's essentials: according to calculations by Dr Gideon Polya, over 17 million avoidable deaths occur every year as a consequence of life-threatening deprivation, mainly in low-income countries. As the term suggests, these preventable deaths occur simply because millions of people live in conditions of extreme deprivation and therefore cannot afford access to the essential goods and services that people in wealthier countries have long taken for granted.

The extent of this ongoing tragedy cannot be overstated when approximately 46,500 lives are needlessly wasted every day: innocent men, women and children who might otherwise have contributed to the cultural and economic development of the world in unimaginable ways. This annual preventable death rate far outweighs the fatalities from any other single event in history since the Second World War, and around half of those affected are young children. Given today's technological advancements and humanity's combined available wealth of $263 trillion, it's perhaps no exaggeration to suggest that the magnitude of these avoidable deaths is tantamount to a global genocide or holocaust.
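The daily figure quoted above follows from simple division of the annual estimate. As a minimal sketch, assuming a flat 17 million deaths per year and a 365-day year (the function name and the rounding are illustrative, not taken from the report):

```python
# Annual figure taken from the text ("over 17 million avoidable deaths");
# the 365-day year and the helper name are assumptions for illustration.
AVOIDABLE_DEATHS_PER_YEAR = 17_000_000
DAYS_PER_YEAR = 365


def deaths_per_day(per_year: float, days: int = DAYS_PER_YEAR) -> float:
    """Convert an annual death toll into an average daily toll."""
    return per_year / days


if __name__ == "__main__":
    daily = deaths_per_day(AVOIDABLE_DEATHS_PER_YEAR)
    print(f"{daily:,.0f} avoidable deaths per day on average")
```

Under these assumptions the result is a little under 46,600 per day, in line with the rounded figure of approximately 46,500 used in the text.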

What, then, should be our reaction to the sheer extent of life-threatening deprivation in the world, given that our combined efforts to meet urgent human needs, as expressed by the actions of our elected governments, are tragically inadequate on a global scale? It's surely futile to direct further policy proposals or alternative ideas to the world's governments, who are failing to enact the emergency measures and far-reaching structural reforms that are necessary to end extreme poverty within an immediate time-frame. Instead, civil society groups and engaged citizens should adopt a strategy for global transformation based on solidarity with the world's poor and a united demand for governments to radically reorder their distorted priorities.

STWR's founder Mohammed Mesbahi has proposed such a strategy for redirecting public attention towards the shameful injustice of this growing humanitarian crisis, based on the need for governments to finally uphold the long-agreed entitlements set out in Article 25 of the Universal Declaration of Human Rights as their leading concern in the period ahead. As Mesbahi explains, the time has come for millions of citizens in every country to collectively demand the universal realisation of these basic rights (adequate food, housing, healthcare and social security for all) until governments significantly reform the global economic system to address the root causes of hunger and needless poverty-related deaths.

With over 70% of the global population struggling to live on less than $10 per day, there is no doubt that a common cause for guaranteeing basic socio-economic rights across the world could bring together many millions of people in different continents on a common platform for transformative change. If these public protests can become the subject of mainstream political and media discussions, people from all walks of life may soon be persuaded to join in - including those who have never demonstrated before in the richest nations, along with the poorest citizens in low-income countries.

Needless to say, galvanising an informed public opinion the world over is a formidable challenge given the false mainstream narrative on poverty reduction and a general lack of popular awareness within affluent society. But without a collective worldwide awakening to the injustice of widespread poverty amidst excessive wealth inequalities, it may remain impossible to overcome vested interests and the political inertia of governments. The responsibility for change falls squarely on the shoulders of us all - ordinary engaged citizens - to march on the streets in enormous numbers and forge a formidable public voice in favour of ending extreme human deprivation on the basis of an international emergency.

This article is drawn from a report entitled: Beyond the Sustainable Development Goals: uncovering the truth about global poverty and demanding the universal realisation of Article 25

Image credit: David Sandison/Save The Children

A Perspective on Uncertainty and Climate Science

Marcia Wyatt

This article was originally published in Climate Etc., 11 October 2015

REPRINTED WITH PERMISSION

This past summer I was asked to give a presentation on science and ethics. The person who asked me was motivated by the Pope's encyclical, specifically its comments regarding climate change.

The group to which I was to present (its members most interested and well-informed about climate, yet also confused by the strong opinions of dignitaries and luminaries, such as the Pope) wanted a climate scientist's perspective. I could not help them with their ethical outlook, but I realized what people really need is not the tit-for-tat, back-and-forth endless debate, with each side being "right"; they need to understand that we scientists really don't know what climate is doing or will do! No one does. We only have degrees of uncertainty. Thus, the presentation evolved, with the originally requested topic modified to discuss my perspective on the uncertainties in climate science. After giving the talk, I realized there could be many versions of such a presentation, with different levels of detail and scientific background. I would like this information to be communicated to the public, as well as to college and high-school students. In the past, I could ignore the dissemination of misleading information to the general public. No longer can I. The stakes are too high; the consequences too dire.

Uncertainty in Climate Science

The complete PowerPoint presentation can be downloaded here: Uncertainty in Climate Science

Introduction

Word around town is that science is truth. Sorry to damp the zeal, but science is NOT truth. By definition, science equates to varying degrees of uncertainty, with hypotheses and theories bookending the uncertainty spectrum: to some, a rather boring outlook. Hypotheses (suggested explanations for how things work, based upon observed evidence and offering potential prediction of phenomena whose correlative relationships may be causal) must be both testable and falsifiable. A hypothesis cannot be proven to be true; it can only be proved false. For a hypothesis to be elevated to theory, a rare and significant promotion, the hypothesis must survive multiple replications of results with a wide set of data, and it must be tested under a variety of circumstances. Even then, while the uncertainty of a theory is minimized, it is never zero. Hence, science is the constant process of trying to figure out how things might work. To a scientist, this is exhilarating. To the non-scientist wanting a solid answer, not so much!

Well, this is all relatively bad news for those of us who study climate. Climate, by nature, does not lend itself well to being tested. We can't isolate its parts and study them in a lab. We can't condense decades and millennia into hours and days in order to extract multiple data points and long records. The intertwined and multiple parts of the climate system leave its evaluation stymied by the endless unknown unknowns! So what do we do? We seek out proxy data, riddled with caveats. We invoke computer climate models, riddled with caveats. No matter which way we turn, we are faced with caveats, but it's the best we've got. Sometimes we get so used to working within these constraints imposed upon us, we begin to lose sight of our assumptions, and the attendant biases, caveats, and uncertainties laced throughout our research format. In time, it is not difficult to see how we come to believe the little fantasy world we have made for ourselves in an attempt to make sense of nature's vast stomping grounds. And when it is demanded of us to stop equivocating, to make the discussion short and sweet, packaging into sound bites the complexities of 4.6 billion years' perspective on climate, how its changing character of today differs from any time past, and how we humans and other earthly creatures will survive an onslaught that, by human perception, appears unprecedented and unendurable, what can we do? Politics enters the stage, followed closely by celebrities and media. Messages are surgically edited to be woven into stories far more captivating than those told by the equivocating egg-heads; and photographers, accompanied by narrators with scholarly accents and compelling rhetoric, come in to educate the public. And the public find no choice but to believe. Uncertainty is forgotten; actually no, it is abandoned. Uncertainty is not for the impatient. Good intentions pave the path forward. So where does that leave us? How does one make policy decisions based on science, with uncertainty's role demoted to nuisance status?

It might be of interest to know that historically, skepticism has fueled forward movement of scientific discovery. Uncertainty has always motivated inquiry. Conversely, certainty has squelched it. Certainty entrenches paradigms. Examples dot history of paradigms kept on life support with increasingly complicated constructs to explain phenomena or occurrences inconsistent with hypothesized dynamics and behavior, the 1600-year-long geocentric model being a most vivid example. Upending of faulty paradigms often relies on evolution of technology. New evidence reveals surprises: those unknown unknowns. Ironically, those most educated in a field often are not the ones in history to have revolutionized thought. Lay persons and scientists of different specialties often were the ones who saw what was hidden from the hardened mental filters of those overly invested in a paradigm's survival. Skepticism has gotten a bad rap in recent years. Instead, it should be embraced. It is skepticism, not conformity, that provides the checks and balances to humans' tendency to see the expected.

How does one make good decisions in the context of uncertainty? One must gather good evidence: not hearsay, not sound bites, nor consensus. Good evidence can be garnered only through understanding how conclusions are reached: the methodology and data used to construct them. This is not easy, but just accepting what others say (their filtered conclusions, even those of respected scientists or trusted dignitaries), without investigating the scientific process employed in generating a conclusion and without exploring alternate possible explanations for observed phenomena, destines its victims to unintended consequences.

Scientists do agree: Temperatures have increased since 1850; CO2 has too. CO2 is an infrared warmer. With no positive or negative feedback responses, a doubling of it will lead to an approximate 1.1°C temperature increase. Disagreement erupts over just how much temperature has risen; what part is due to CO2; what part to land-use changes; what part to natural or intrinsic influences. How well do models represent climate; what is climate's sensitivity; are the data reliable? Is there really a problem? Is it a problem that can be solved with proposed solutions? And what are potential consequences of proposed solutions? It is said to be certain, to be "settled science". Really!?!
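The no-feedback figure of roughly 1.1°C per doubling can be reproduced with a back-of-envelope calculation. The sketch below is only illustrative: the logarithmic forcing fit (5.35 W/m² per natural-log unit of the concentration ratio) and the Planck response of about 3.2 W/m² per °C are commonly quoted approximations, not values given in this article, and the function names are hypothetical.

```python
import math

# Assumed constants (not from the article): a widely used simplified fit
# for CO2 radiative forcing and an approximate Planck (no-feedback) response.
FORCING_COEFF_W_M2 = 5.35       # W/m^2 per unit of ln(C / C0)
PLANCK_RESPONSE_W_M2_K = 3.2    # W/m^2 of extra outgoing radiation per degree C


def co2_forcing(concentration_ratio: float) -> float:
    """Radiative forcing (W/m^2) for a given ratio C / C0 of CO2 concentrations."""
    return FORCING_COEFF_W_M2 * math.log(concentration_ratio)


def no_feedback_warming(concentration_ratio: float = 2.0) -> float:
    """Equilibrium warming (degrees C) if only the Planck response acts."""
    return co2_forcing(concentration_ratio) / PLANCK_RESPONSE_W_M2_K


if __name__ == "__main__":
    print(f"Forcing for doubled CO2: {co2_forcing(2.0):.2f} W/m^2")
    print(f"No-feedback warming:     {no_feedback_warming(2.0):.2f} degrees C")
```

With these assumed constants the result lands between 1.1 and 1.2°C. The disagreements listed above concern everything this sketch leaves out: the sign and size of feedbacks, other forcings, and internal variability.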

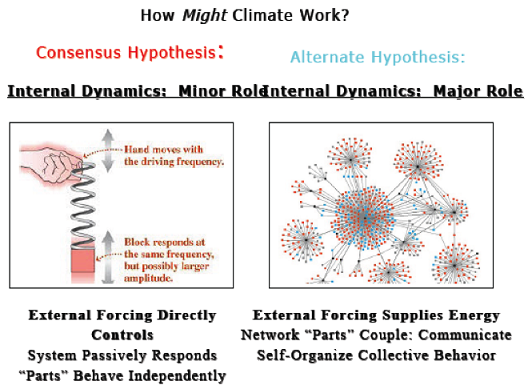

1. Hypotheses overview: More than one hypothesis can explain observed behavior.

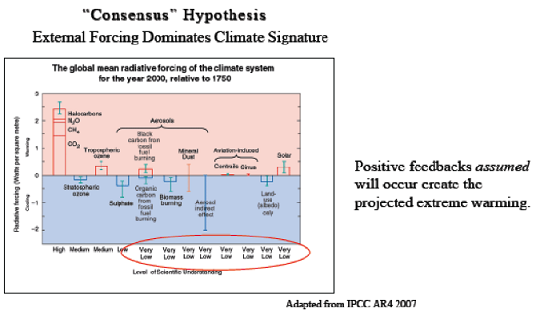

Two general and contrasting views exist on climate behavior. One view is the consensus hypothesis, where external forcing, both natural and anthropogenic, dominates climate behavior ("climate change"), a modification of the former anthropogenic global warming (AGW) hypothesis. The contrasting view allows a greater role for internally generated dynamics, especially on decadal-plus time scales.

According to the external-forcing view, parts of a system operate relatively independently; the system is prone to instability, is not resilient, and, with continued anthropogenic greenhouse-gas-emission increases, is projected to result in catastrophic climatic changes.

In contrast, the intrinsic-dynamics view envisions network-behavior dominating climate behavior, where parts of the ocean, ice, and atmosphere sub-systems self-organize over decadal-plus time scales, interacting with one another, and thereby initiating intra-network communication, conveying resilience and relative stability to the climate system.

The external-forcing hypothesis is based on strong understanding of greenhouse-gas forcing, but low-to-very-low levels of understanding of other external forcings: clouds, aerosols, and solar influence, for example. Extreme increases in projected temperatures rely on incomplete understanding of the reinforcing consequences of the original CO2-induced warming, i.e. positive feedbacks. Little is understood about potential damping mechanisms, e.g. clouds, aerosols, atmospheric convection, and precipitation. Likewise, little is fully understood about, or attributed to, intrinsic dynamics. None of these weaknesses guarantees this hypothesis is wrong, but the uncertainties involved are striking. More striking is that the hypothesis is not testable. It cannot be falsified. The alternate hypothesis, the network hypothesis, is rooted in observation, among a variety of indices. Mechanisms have been elucidated as possible dynamics underlying climate-signal evolution. Uncertainties underlie this hypothesis as well. Yet its strength lies in observations. They are consistent with the hypothesis, and in time (years to decades) this hypothesis is testable and falsifiable.

2. Models: Hypotheses themselves, models are good tools, yet not reality.

Computer climate models (complex, incomplete, and flawed) have failed to capture the temporal and spatial signatures of observed climate behavior. Great tools, they are, but they, themselves, are hypotheses. Each one is an experiment, of sorts. A climate model can be thought of as a script, taking orders from computer programmers in the form of complex mathematical equations. Increased complexity of input is expensive and time-consuming. Hence, simplifying is required. Lost is the ability to capture details of climate phenomena too large or too complex for the model grid's scale of resolution. To compensate, some assumed-to-be unimportant phenomena are omitted entirely; other phenomena are parameterized, meaning simple empirical formulas are used to represent the collection of phenomena as best as understood, with adjustable coefficients inserted: thermostats, of sorts, tweakable to fit observations. This does not mean output is necessarily wrong, but it does mean uncertainty looms in procedure and in results! A major problem arises when model outputs are considered to be reality.

3. Data: Ah yes, the data

"But the data!!!" exclaimed the woman, hurriedly removing herself from my presence in undisguised disgust. All I said was, "climate is complex."

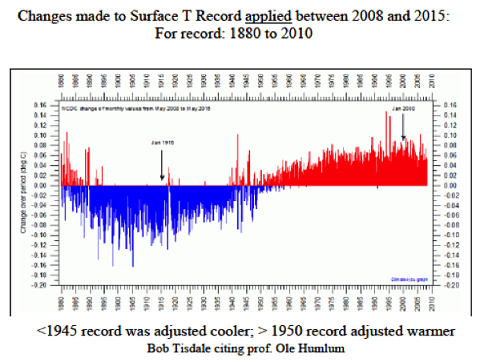

This is an unfortunate tale. Few realize: Data records are a mess. That's the short version. The long one is filled with justifications and fixes. In short, climate is long-term behavior and we don't have long-term records. The longest instrumental temperature records we have are patchwork compilations of temperature readings gathered from various and evolving technologies and varying degrees of instrumental precision. Confounding consistency are continual changes in measuring distributions, numbers of reporting stations, extent of coverage, required conditions of the measuring stations, etc. Assumptions rule the temperature record. When we see data that make no sense, we speculate why. If the instrument, technology, conditions, and the like seem sketchy, we document such and assume what climate conditions likely existed and therefore what temperatures should have been recorded, based on a variety of guidelines, and we change the recorded temperatures to what we think they may really have been.

The motivation for adjusting data is honest; at least we hope it is. A recent increase in the frequency of data adjustments in temperature trends has raised red flags, with findings of undocumented changes, questionable extrapolation practices, and computer-initiated homogenization changes made according to assumptions. Some argue that where assumptions might have trumped accuracy, the number of errors is so small as to not present a problem. Yet, it seems yesterday's data sets showed variability over the years. Now the warm 1930s and 1940s have been erased, relegated to mythology. We shiver as we are told of the warmest years on record by hundredths of a degree, and with minor data re-calculations, pauses in observed temperature trends disappear overnight, and we are told to accept this, and we do, in spite of all the uncertainties. Can this be???

There is more than one way to evaluate temperature. Four categories commonly used include surface thermometers, satellite-retrieved measurements, balloon-mounted instrumentation, and proxy data. None of these temperature trends match the modeled trends. Quantitatively, among the four temperature records, while their trends are analogous to one another, the magnitudes of their trends are not. Surface temperature trends are steeper than satellite-retrieved and balloon-based temperature trends, while satellite and balloon temperatures are similar to one another. Tree-rings buck the trend further, with one of cooling since 1940, most strongly since the 1960s. Tree-rings, depending on tree species and location, capture a variety of information, e.g. moisture content, sun exposure, and also temperatures, generally maximum ones. On the other hand, much of the increase observed in surface instrumental land temperatures can be attributed to increases in minimum temperatures, which, when averaged with their daily maximum counterparts, reflect an increase. Satellite and balloon instrumentation infers temperature of the lower troposphere, where greenhouse-gas warming is supposed to be greater than surface warming. Thus, all methods differ in where and what they measure. All temperature data are further enhanced by extrapolations from neighboring stations, some up to 1200 km away, as in the Arctic, the region known to host the widest extremes in temperature on multidecadal timescales. Sea-surface-temperature measuring methods have their own story. And then we use modeled data or reanalysis products to infill missing data points. And sometimes we mix modeled data with observational data, subtracting one from the other, in order to evaluate climate. But right or wrong, accurate or inaccurate, this is what we have. Judgment on such is not the point here. The point is uncertainty: bias is potentially injected at every step of "settled science".

Archival records speak to hot intervals: "The Arctic Ocean is warming up, icebergs are growing scarcer; in some places the seals are finding the water too hot ... a radical change in climate conditions and hitherto unheard-of temperatures in the Arctic ... well-known glaciers have entirely disappeared" (Washington Post: November 2, 1922). And in 1933, the New York Times: "America in longest warm spell since 1776 ... a 25-year rise." And again in 1947, the New York Times: "A mysterious warming of the climate is slowly manifesting itself in the Arctic, engendering a serious international problem."

And history tells us of cold. July 18, 1970, New York Times: "The United States and the Soviet Union are mounting large-scale investigations to determine why the Arctic climate is becoming more frigid, why parts of the Arctic sea ice have recently become ominously thicker and whether the extent of that ice cover contributes to the onset of ice ages." Fortune Magazine in February of 1974 warns of a "very important climate change going on right now ... not merely something of academic interest ... if it continues, will affect the whole human occupation of the earth."

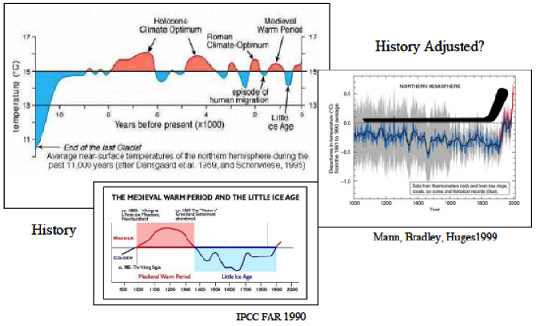

A longer view of climate, one supported by thousands of papers pre-dating the 1990s, showed pronounced variability and warm intervals equal to those of today, the most recent of which was about a thousand years ago. Studies in the late 1990s removed that variability. And while the science behind the historical climate revisions has been challenged and shown flawed, the public perception of past uniformity lingers.

5. Consensus: Not a measure of scientific validity.

Science has always been a story of revision. Consensus-based paradigms come and go. The geocentric model endured for 1600 years. But consensus plays no role in scientific validity. Yet, one can understand how such paradigms evolve. Limitations of technology, egos, hardened mental filters, and the like can contribute to a flawed paradigm's endurance. Typically paradigms are perpetuated by the best educated. Those not immersed in the field and not financially tied to the discipline were the ones who saw through a different filter and revolutionized a science that was not necessarily their area of expertise.

Sometimes scientists find themselves split between being scientists and being useful to society. Most have read the words of scientist Stephen Schneider, now deceased, but once a scientist at NCAR: "We are not just scientists, but human beings ... We have to offer up scary scenarios, make simplified, dramatic statements, and make little mention of any doubts we might have ..."

And then that of an NCAR scientist (to remain unnamed) who spoke to a class of mine in 2007: "We should not talk to the politicians about our doubt or the uncertainties of our model output; we should keep that among ourselves, when we are talking to other scientists. It is our moral duty to express certainty." Yes, scientists are human.

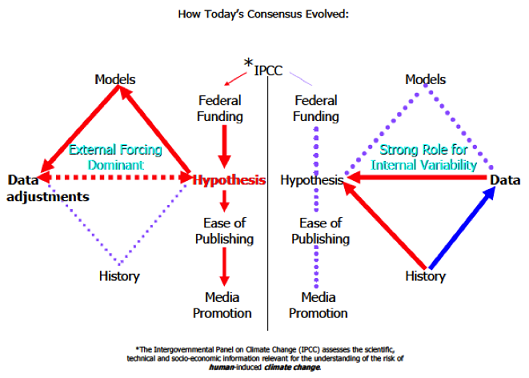

The diagram that follows traces my view of how today's consensus evolved.

The left side shows how the IPCC* conclusions and goals feed the federal funding for grants given to scientists to study, specifically, the effect of anthropogenic CO2 emissions on climate behavior (AGW: anthropogenic global warming). This is the external-forcing-dominant paradigm. Thus, the funding feeds the AGW hypothesis. In turn, the hypothesis inspires the computer-climate-model designs. The modeled output, in turn, has led slowly to the observed data being adjusted, as the observed data records tend to be inconsistent with theory. The data, while fed by models and hypothesis, in turn, feed the hypothesis. Studies supporting the consensus hypothesis are easily published, with review processes more streamlined and lenient than for studies whose conclusions do not support the hypothesis or are neutral. This all dovetails with media promotion, typically highlighting only AGW-supporting conclusions and not the methodology and data used to derive the conclusion, and not the authors' noted limitations and weaknesses of the study and its conclusions.

The right side shows the fate of a non-AGW hypothesis: The IPCC does not fuel funding for the hypotheses that are not AGW, those that tend to argue for a strong role for internally generated dynamics (intrinsic variability). In the case of an alternate hypothesis, the data inspire the hypotheses. The historical data feed the hypothesis. Modeling with the atmosphere-ocean coupled general circulation models (AOGCMs) used for IPCC-related research does not support these hypotheses; it is assumed that critical dynamics are either absent or poorly represented in the AOGCMs.

White asterisks: modified and modeled data. Red dotted line: no correlation. Blue arrow: arrow points from the end member that supports the other. Red arrow: arrow points to the end member being driven by the other member. Red dashed double arrow: the two end members are consistent with or supportive of one another.

6. Perceptions/Reality: Things aren't always as they seem.

"Photo-journalism and social media have enhanced our understanding of the world. They bring to our eyes, and our hearts, the enormity of global changes that imperil our future." This eloquent statement, said to me recently by an acquaintance, was followed by an attempt to boost the credibility of her words: "And I'm a Republican!" Yes, I understand the political framing, much as I rebel against it, as it has no place in science, but that is today's reality. And she was on to something; indeed, photo-journalism and the power of social networking have scripted our perceptions and redesigned reality for our consumption. But behind every photograph of a stranded polar bear, of mountain glaciers shrinking, of drought-ravaged landscapes, of tornado-inflicted devastation, of flooded neighborhoods, of pounding seas and calving glaciers, hurricane-pounded surfs and ice-locked shipping ports, our impulse to assign cause to effect confounds our ability to reason, to see the story behind the sensation.

For example: Polar-bear populations have rebounded, especially since the hunting rules were changed in the 1950s. The bears have redistributed their populations within the Arctic, and for those in regions of greater ice loss, the white giants have been found to exhibit foraging plasticity, i.e., they are changing their diets[1]. In the cases of droughts, hurricanes, weather events, etc., many exhibit decadal to multidecadal cyclical behavior, with human population shifts further modifying the trends, not shown to be due to global warming, but through land-use changes, through changes in perception about the events due to where population centers have migrated, and through greater exposure due to 24/7 news and a camera phone in every pocket. Calving glaciers are calving because they are growing; retreating glaciers, especially mountain glaciers, are retreating for a variety of reasons. While rising temperatures certainly play a role in some cases, little evidence supports global warming as the main culprit. In fact, mountain glaciers are really bad thermometers: adjacent glaciers may exhibit opposing trends, with one advancing and the other retreating. Much of the retreat witnessed in glaciers occurred long before carbon-dioxide emissions were prominent. Precipitation patterns, winds, and solar-insolation patterns are among the factors dominating the behavior of these alpine features. Sea-level rise is occurring at a rate of about 2 mm/year, depending on the study cited. A cyclical component underlies a linear one. Complications in measuring and comparing current to historical measurements confound clear assessments. Greenland and Antarctic ice sheets, if melted, would contribute the most severe consequences to rising water, but the dynamics are complex and our understanding of them not at all imbued with certainty.

Scientists compound the misperceptions at times. The famous study by Parmesan et al. (1999)[2], associating warming with the poleward-migration patterns of butterflies in northern Europe, is one such example. A shift has been documented, but a conclusive reason was far from established, a direct link to temperature not forthcoming. But the conclusion was promoted anyway. The uncertainties lay in the inconvenient details: about a third of the 35 species studied moved north with warming temperatures; approximately two-thirds expanded north, not abandoning their southern bounds. A small percentage actually shifted southward with increasing temperatures. The list of such ambiguities in study results is long, none pliable within sound bites, thereby muting the message of uncertainty!

("Possible consequences of global warming", an essay: www.wyattonearth.net, "investigations into climate and geology" page)

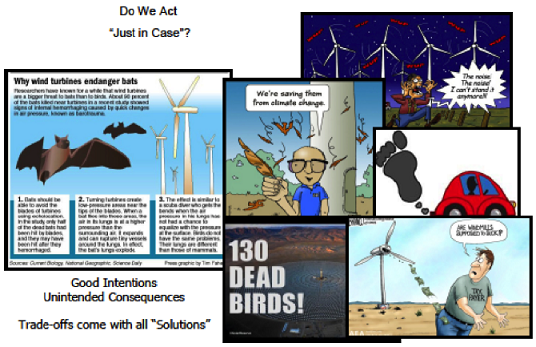

7. Solutions: Everything is a tradeoff. Beware the fix being worse than the problem.

There is the viewpoint that we should "just do something, just in case."

An argument can be made for this opinion. But arguments can be made against it too, many laid out in this text and its accompanying PowerPoint presentation.

Regulation is one approach toward a solution. Will there be unintended impacts? Economic? Environmental? What countries will comply? CO2 knows no boundaries. And most importantly, what correction in the climate-change trend can be effected? Will our best intentions curtail warming significantly? By some estimates, a 40% decrease of CO2 emissions in the United States alone will avert a scant 0.016°C of projected warming by 2050, assuming a climate sensitivity of 2°C.[3] And if climate sensitivity is assumed larger, at the high end of estimates, say 4.5°C, then the temperature increase averted by 2050 will be 0.025°C, and by 2100, 0.056°C. Bring all industrialized nations under regulatory control, and if the collective reduction of emissions is 20%, with an assumed mid-range climate sensitivity of 3°C, the temperature increase thwarted by 2050 is estimated at 0.025°C; by 2100, 0.045°C. Is the science settled enough to justify the drastic economic adjustments required for the projected solution to be realized? What level of uncertainty is acceptable?

And seeking energy resources that provide beneficial alternatives is not at all a bad thing, for a variety of reasons, not just environmental. But caution is warranted: as with all good intentions, there is always a trade-off, usually hidden behind the good feeling of doing something. Take wind turbines, for example. Just a few months ago, the German medical community requested a halt to further turbine installation until the health impacts of turbine-associated low-frequency noise can be further studied. Perhaps stories of sheep and goats dying from sleep deprivation, and reported human problems of headaches, dizziness, nausea and insomnia associated with noise from the whapping blades, hold merit. Birds and bats are casualties, hundreds of thousands each year, with trickle-down consequences on insect populations (increasing mosquitoes, for one). Costs and pollution of associated fossil-fuel use are a dirty secret, a consequence of on-demand backup requirements arising from wind's inconsistent presence. Local weather changes result from turbine-altered wind patterns. And solar solutions are not without issue: manufacturing-related leakage of SF6 and NF3, greenhouse gases 23,000 and 17,000 times as potent as CO2, respectively; reduced albedo (reflectivity) in desert areas due to acreage covered in black panels; and birds vaporizing in flight over hot panels. Clean trucks, newer than five years old, in Europe, are associated with unexpected increases (34%) in emissions of black carbon (soot), a warming agent.

These points are a small sampling of the many documented issues of re-designing our energy use. Not that re-structuring would not be worthwhile to strive toward, but we have talked about this goal for at least forty years and little progress has been made. It must be realized that every source of generating and transmitting energy comes with trade-offs. None are without flaws and detriments.

Deciding on action is difficult, and a matter of personal opinion. Understanding the level of scientific certainty of the proposed problem is one step toward that decision. How settled is the science? How much uncertainty hides behind the loud voices and compelling photographs?

References

[1] Gormezano and Rockwell (2013): What to eat now? Shifts in polar bear diet during the ice-free season in western Hudson Bay; Ecology and Evolution 3(10):3509-3523; doi:10.1002/ece3.740

[2] Parmesan et al. (1999): Poleward shifts in geographical ranges of butterfly species associated with regional warming; Nature 399, 579-583; doi:10.1038/21181

[3] A climate sensitivity of 2°C means that climate is assumed to behave in such a way that, for a doubling of CO2, temperatures will increase 2°C.

ABOUT THE AUTHOR

Marcia Wyatt is a geologist who did her doctoral thesis work on climate variability. For more information, visit her website on the Stadium-Wave.

|

For Sustainable Energy, Choose Nuclear

S. Fred Singer

This article was originally published in American Thinker, 30 September 2015

REPRINTED WITH PERMISSION

Many believe that wind and solar energy will be essential when the world runs out of non-renewable fossil fuels. They also believe that wind and solar are unique in providing energy that's carbon-free, inexhaustible, and essentially without cost. However, a closer look shows that all three special features are based on illusions and wishful thinking. Nuclear may be the preferred energy source for the long-range future, but it is being downgraded politically.

|

Fossil fuels (coal, oil, and natural gas) are really solar energy stored up over millions of years of geologic history. Discovery and exploitation of these fuels have made possible the Industrial Revolution of the past three centuries, with huge advances in the living standard of an exploding global population, and advances in science that have led to the development of sustainable, non-fossil-based sources of energy -- assuring availability of vital energy supplies far into the future.

Energy based on nuclear fission has many of the same advantages and none of the disadvantages of solar and wind: it emits no carbon dioxide (CO2) and is practically inexhaustible. Nuclear does have special problems; but these are mostly based on irrational fears.

A major problem for solar/wind is intermittency -- while nuclear reactors operate best supplying reliable, steady base-load power. Intermittency can be partially overcome by providing costly stand-by power, at least partly from fossil fuels. But nuclear also has special problems (like the care and disposal of spent fuel) that raise its cost -- and inevitably lead to more emission of CO2. Such special problems make any cost comparison with solar/wind rather difficult and also somewhat arbitrary.

At first glance, solar, wind, and nuclear all generate electric power without emitting the greenhouse (GH) gas carbon dioxide. But this simple argument is misleading. All three sources of energy require the manufacture of equipment, and that usually involves some CO2 emission; a rough measure is given by comparing the relative capital costs of site preparation and construction, as well as of operation and maintenance (O&M). Caution must be exercised here: a nuclear plant has a much longer useful life (up to 60 years, and beyond). We don't have much experience yet with corresponding lifetimes and O&M costs for solar and wind, but they are likely to be higher than for nuclear reactors -- except for the preparation cost of nuclear reactor fuel.

There is general agreement that both solar and wind energy are truly inexhaustible and satisfy the principle of sustainability. However, both are very dilute and require large land areas -- as well as special favorable locations -- and subsequent transmission of electric power. On the other hand, nuclear power plants have only a tiny footprint and can be placed at many more locations, provided there is cooling water nearby.

Nuclear Energy is Sustainable

Surprisingly, nuclear energy is also inexhaustible -- for all practical purposes. Uranium is not in short supply, as many assume; this is true only for high-grade ores, the only ones considered worth mining these days. Beyond lower-grade ores, there is uranium in granites -- and an essentially infinite but currently uneconomic amount in the world's oceans.

Only 0.7% of natural uranium is in the form of the fissionable U-235 isotope; the remainder is inert U-238. For use in power reactors, the uranium fuel must be enriched in U-235 to at least the 2% level; for weapons, the required level rises to about 80%.

Currently, uranium is cheap enough to justify once-through use in light-water power reactors; the fuel rods are replaced after a fraction of the U-235 is burnt up. In the process, some fissionable plutonium (Pu) is also created (from U-238) and generates heat -- and electric power. The spent fuel is mostly U-238, plus radioactive fission products with lifetimes measured in centuries, and small amounts of long-lived radioactive Pu isotopes and other nasty heavy elements.

But as every nuclear engineer knows, the spent fuel is not waste but constitutes an important potential resource. The inert U-238 can be transformed into valuable fissionable reactor fuel in breeder reactors -- enlarging the useful uranium resource by a factor of about 100. And beyond uranium ores, there are vast quantities of thorium ores that can also yield fissionable material for reactor fuel. It is mainly a matter of reactor design -- not wasting any neutrons used for breeding.

And we haven't even mentioned nuclear fusion, the energy source that powers our Sun. Fusion has been the holy grail of plasma physicists, who after decades of research have not yet been successful in building a stable fusion reactor; the hydrogen bomb is a version of unstable fusion. I hate to admit this: We may not need fusion reactors at all; uranium fission works just fine. However, a hybrid fusion-fission design might make sense; it would use pulsed fusion as a source of neutrons for breeding inert uranium or thorium into fissionable material for reactor fuel.

So why are we not moving full speed ahead with all forms of nuclear, destined to become our ultimate source of energy for generating heat and electricity? Are we wasting precious time and dollars on marginal improvements to solar photovoltaic and wind technology? What seems to be holding back nuclear is public concern about safety, proliferation, and disposal of spent fuel.

Safety: It should be noted that there have never been lives lost in commercial nuclear accidents; Chernobyl was a reactor type not commercially used in the West. Proper design is constantly improving safety by reducing the number of valves and pipes, by relying on gravity in inherently safe designs, and by properly training human operators.

Nuclear proliferation: Over past decades, many things have changed. There is no longer a nuclear duopoly of US and USSR. The horses have left the stable: If terrorist North Korea and Iran can build weapons -- and delivery systems -- it may be time to rethink international proliferation policy.

Disposal of spent reactor fuel: I am assured there are no real technical problems; there are even reactor designs (like the IFR, the Integral Fast Reactor) that can eliminate all waste. Reprocessing of spent reactor fuel works just fine, but has been discouraged because of historic concerns with proliferation based on plutonium; but power reactors don't produce plutonium suitable for weapons.

Relative Costs

It is doubtful that future generations will ever enjoy the truly low cost of fossil fuels. In a rational world, low-cost deposits are exploited first and costs rise gradually; this has not been the case for petroleum, mainly for geopolitical reasons, but it is more or less happening with coal, where many low-cost deposits around the world compete with each other.

For wind and solar, technical limits may soon be reached for conversion devices (wind turbines and photovoltaic cells), and a lower cost limit may then be set by O&M costs, by the opportunity cost of the land occupied, and/or by transmission costs of electric power.

For nuclear, there are still many ways to lower cost, widely discussed in technical journals: construction of modular, low-power (~100 megawatt) reactors in factory mass production rather than costly on-site construction of gigawatt reactors. The biggest savings would come from more rapid construction and less delay, involving pre-approval of reactor sites and blanket approval of standardized, mass-produced reactor designs.

Energy Policy

In spite of its obvious advantages, why then is nuclear being downgraded compared to wind and solar? Call me a cynic if you want; but I think the main reason is money. Another reason is the ingrained hatred by Green groups of all things nuclear.

A classic example by now is Solyndra, which managed to waste more than half-a-billion dollars of federal money. In addition to innumerable small projects (that add up to an impressive total), one can cite some major boondoggles: How about the government's third (!) attempt to build a solar power tower? [The first one failed during the Nixon-Carter administrations' Project Independence some 40 years ago.]

None of these projects can survive without generous subsidies, feed-in tariffs, and tax breaks. The costs are borne by ordinary ratepayers; for example, electric energy costs in Denmark and Germany, leaders in wind power, are 3-4 times US costs. In the US, the policy disaster has been strictly bipartisan. While George W. Bush disavowed the Kyoto Protocol, he missed the opportunity to phase out and kill subsidies for solar and wind. We are currently witnessing the consequences of this failure.

Update: A portion of the above essay had been submitted as an op-ed by S. Fred Singer and Gerald E. Marsh to The Bridge, a quarterly journal of the National Academy of Engineering.

ABOUT THE AUTHOR

S. Fred Singer is professor emeritus at the University of Virginia and a founding director of the Science & Environmental Policy Project; in 2014, after 25 years, he stepped down as president of SEPP. His specialty is atmospheric and space physics. An expert in remote sensing and satellites, he served as the founding director of the US Weather Satellite Service and, more recently, as vice chair of the US National Advisory Committee on Oceans & Atmosphere. He is an elected Fellow of several scientific societies and a Senior Fellow of the Heartland Institute and the Independent Institute. He co-authored the NY Times best-seller Unstoppable Global Warming: Every 1500 years. In 2007, he founded and has chaired the NIPCC (Nongovernmental International Panel on Climate Change), which has released several scientific reports [See NIPCCreport.org]. For recent writings see American Thinker and also Google Scholar.

|


|